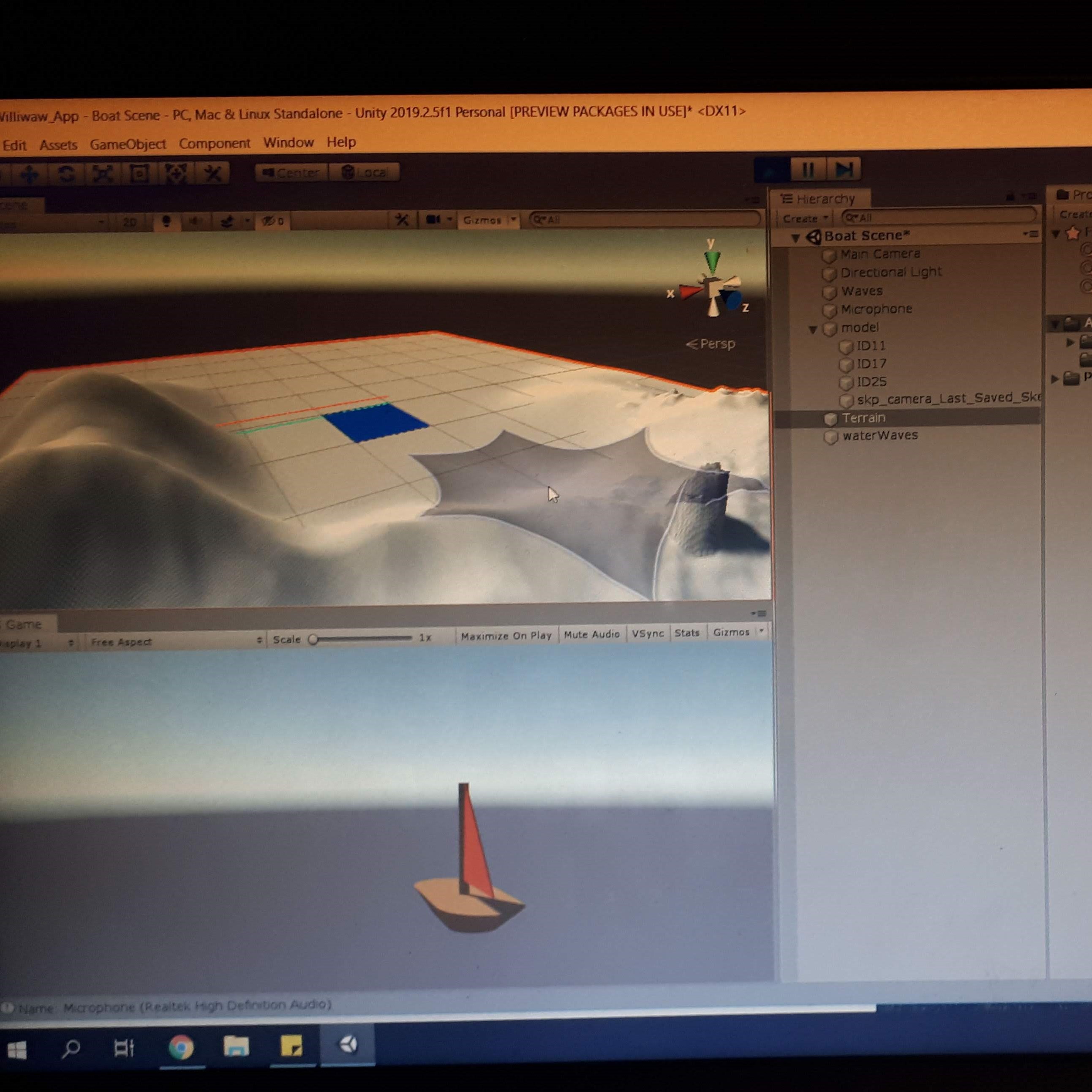

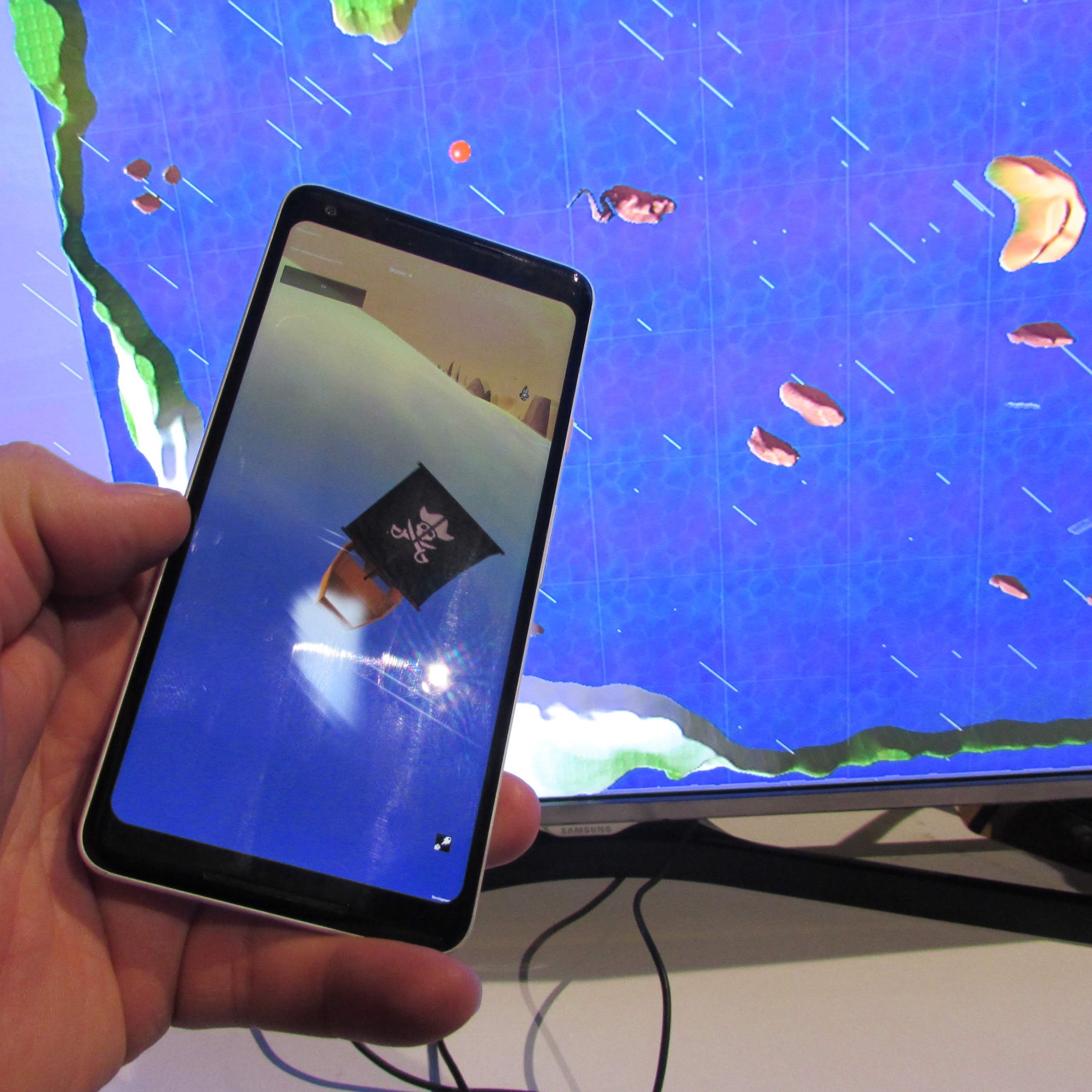

The Unity

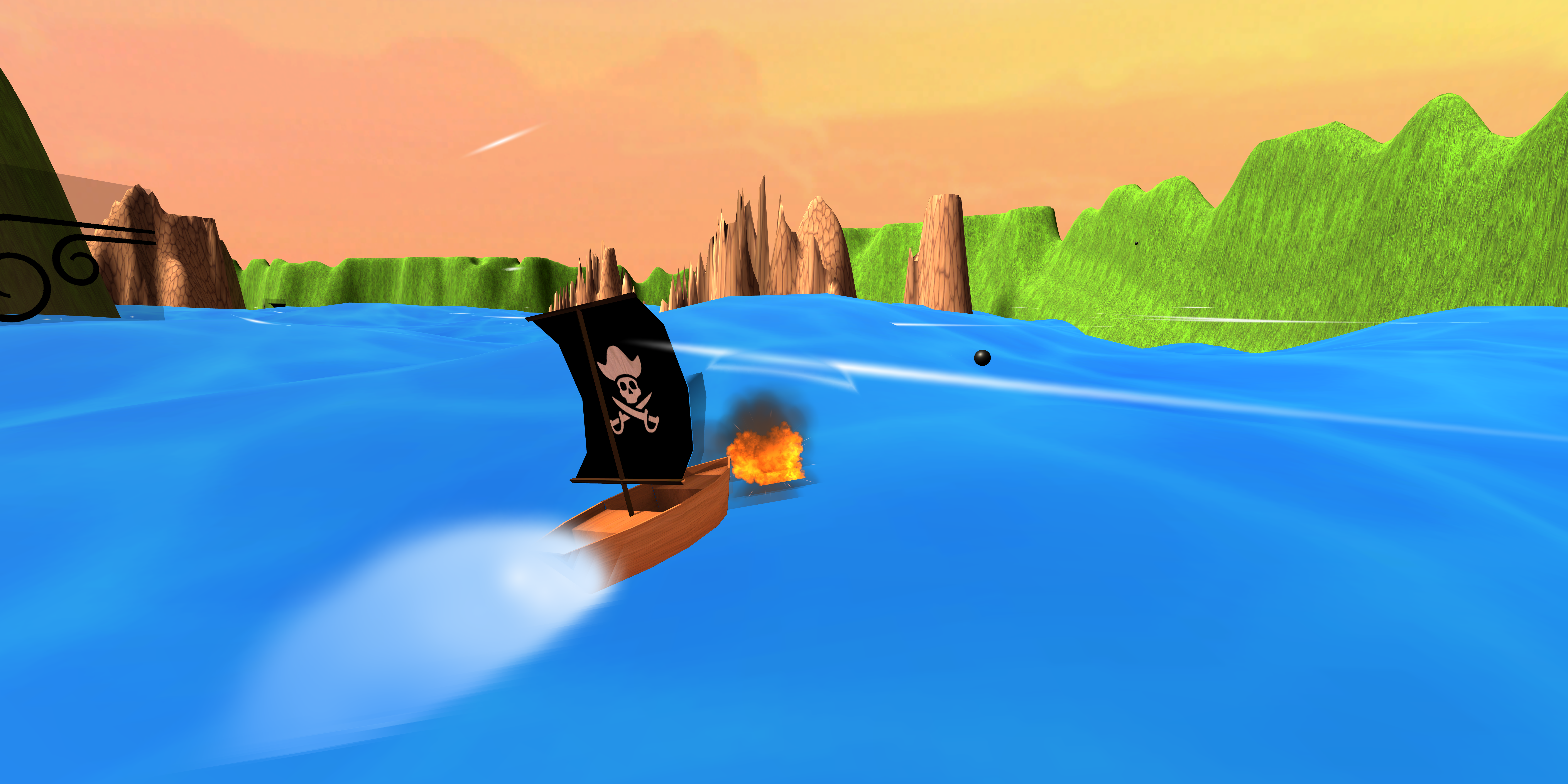

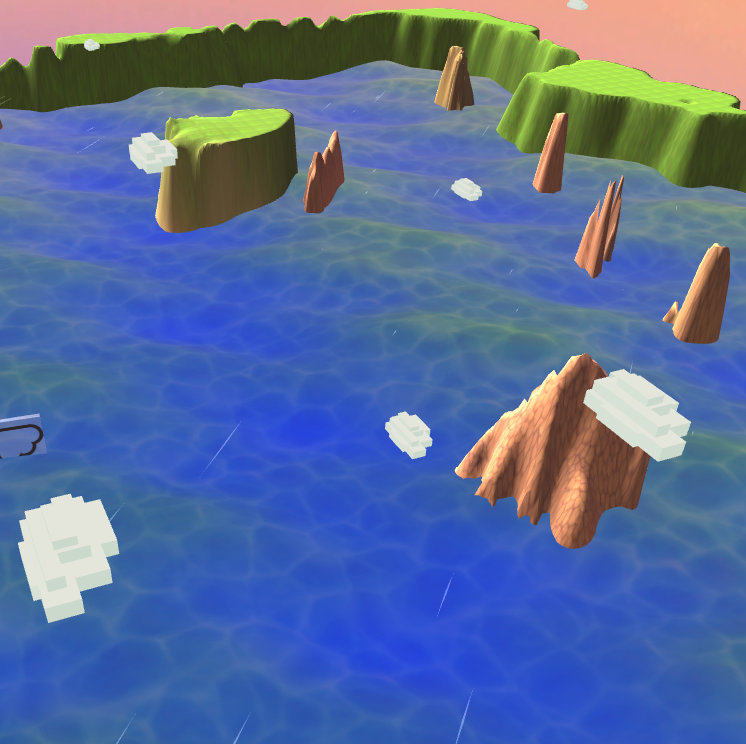

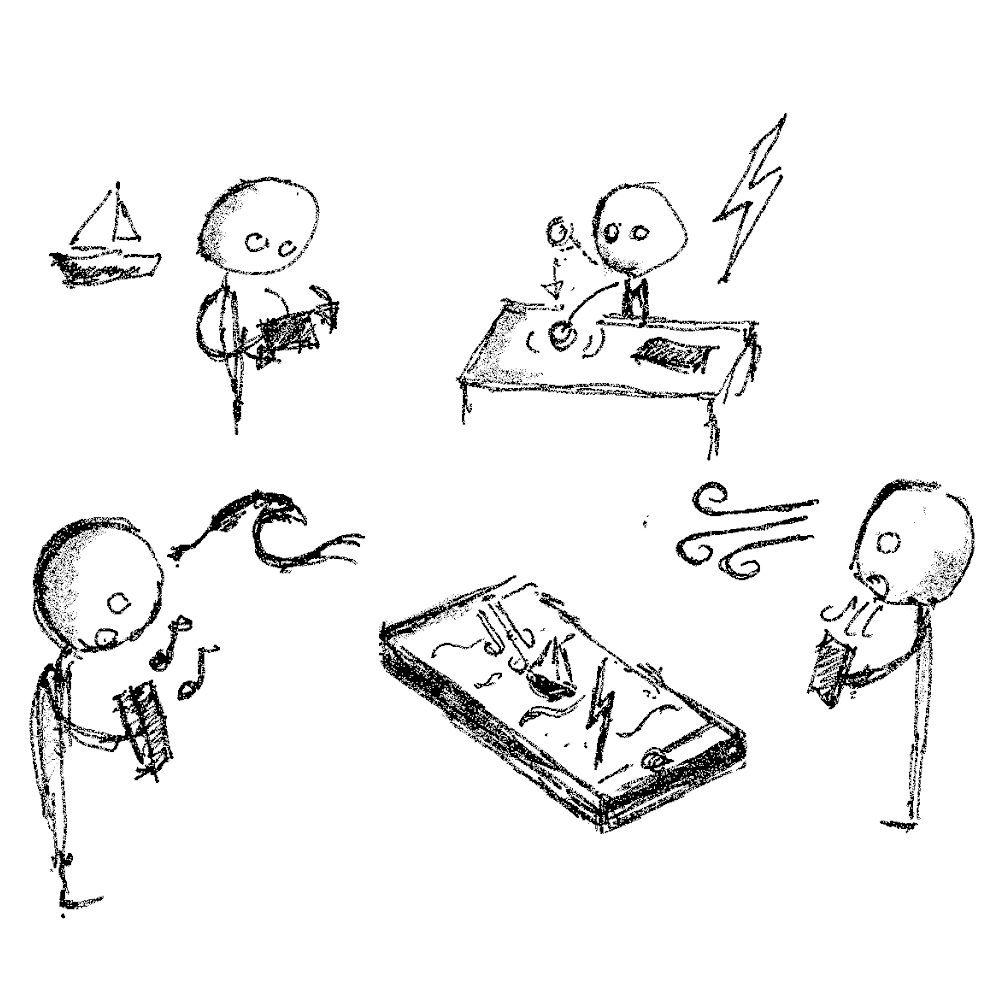

Asset Store was used to download and import ready-made assets,

such as clouds. Using Unity's Terrain Toolbox, a terrain object was created.

Then, Unity’s built-in Terrain Tools was used to shape the terrain

using a variety of brushes to raise/lower terrain, smooth height and

paint terrain. One end of the environment had snow textures painted on the

islands, and the other end desert textures, in order to simplify

localisation.

Assets were imported to create a custom skybox and to place clouds in the sky, and a custom script was then created to have the clouds move across the sky in-game. Lastly, an audio clip was imported and attached to the boat camera as an audio source, in order to simulate ambient sounds.
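
The cloud movement described above could be implemented along the following lines. This is only a minimal sketch and not the script used in the project: the class name `CloudMover`, the fields `direction`, `speed` and `wrapDistance`, and the wrap-around behaviour are all assumptions made for illustration. It relies only on standard Unity calls (`MonoBehaviour`, `Transform.Translate`, `Time.deltaTime`).

```csharp
using UnityEngine;

// Hypothetical sketch: drifts the cloud object this component is attached to
// in a fixed direction and wraps it back once it has travelled far enough.
public class CloudMover : MonoBehaviour
{
    // Assumed tunables; the values used in the original project are not known.
    [SerializeField] private Vector3 direction = Vector3.right;
    [SerializeField] private float speed = 2f;           // world units per second
    [SerializeField] private float wrapDistance = 500f;  // assumed half-width of the sky area

    private Vector3 startPosition;

    private void Start()
    {
        // Remember where the cloud was placed in the editor.
        startPosition = transform.position;
    }

    private void Update()
    {
        Vector3 dir = direction.normalized;

        // Scale by Time.deltaTime so the drift is frame-rate independent.
        transform.Translate(dir * speed * Time.deltaTime, Space.World);

        // Distance travelled along the drift direction, measured from the start.
        float travelled = Vector3.Dot(transform.position - startPosition, dir);

        // Once the cloud passes the far edge, move it to the opposite edge
        // so that it drifts back across the sky.
        if (travelled > wrapDistance)
        {
            transform.position = startPosition - dir * wrapDistance;
        }
    }
}
```

Under these assumptions, the component would be attached to each cloud object (or to a parent object holding all clouds), with `speed` and `wrapDistance` adjusted in the Inspector to suit the size of the environment.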